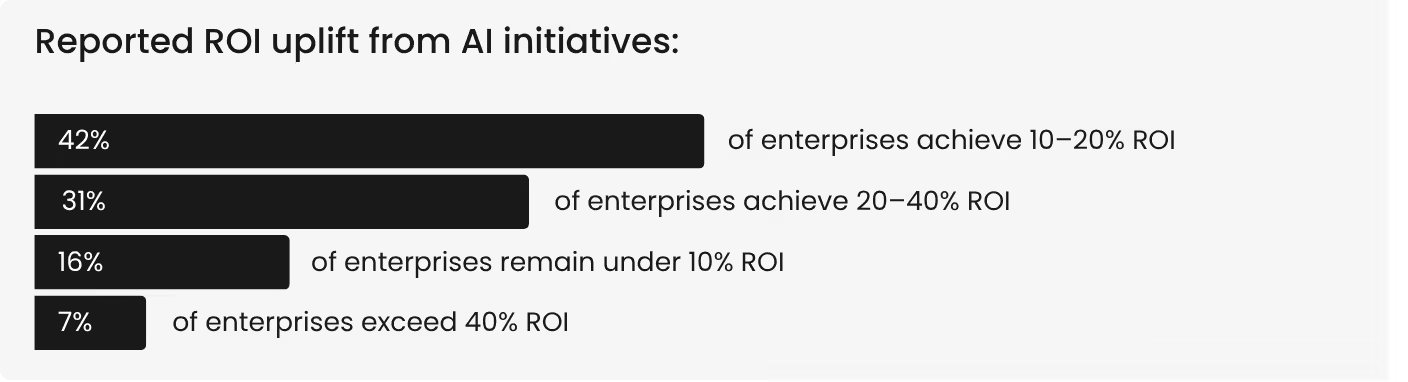

Four in five enterprises report measurable productivity gains from AI. In 85% of enterprises, at least a quarter of employees now use AI weekly. Budgets are increasing. And yet, across our survey of 255 enterprise leaders, only 7% exceed 40% ROI from their AI investments. The largest group, 42%, sits between 10% and 20%, generating enough return to survive budget reviews but not enough to reshape the business.

McKinsey's 2025 Global AI Survey tells a similar story at scale. 88% of organizations now use AI in at least one business function, but only 39% report any enterprise-level EBIT impact. Most of those estimate the impact at less than 5% of total EBIT. The technology is working, but the returns aren’t following.

This isn't a capability gap. It’s an execution gap. And it shows up in the same five places across nearly every enterprise AI program that underperforms. The good news is that every one of these mistakes is correctable. The bad news is that most organizations don’t realize they’re making them until someone asks the uncomfortable question: what did $10 million in AI spend actually return?

We want to spare you that conversation, at least where your AI return on investment is concerned. In the sections below, we cover the five most common mistakes and how to avoid each one.

Measuring time saved instead of value captured

This is the most common, and most expensive, mistake. Time savings have become the default success metric for enterprise AI, and they’re the wrong one. Here’s why.

Our research found that 49% of enterprises report saving two to four hours per employee per week. Another 29% report four to six hours. Yes, those are real productivity gains, but here’s where the math breaks. Only about half of that saved time was converted into measurable business value.

The rest, roughly 1.8 hours per employee per week, disappears into organizational friction: manual validation of AI outputs, dashboards that surface insights without connecting to action workflows, and approval queues that sit between an AI recommendation and the decision it should trigger.

Only 5% of enterprises convert 75% or more of saved time into captured value. That means for most organizations, AI is generating time that nobody is accounting for and nobody is reinvesting. Multiply 1.8 leaked hours by the number of employees using AI weekly, and the uncaptured value adds up quickly.
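To make the leak concrete, the sketch below computes a value conversion rate (captured hours divided by saved hours) and the weekly hours lost across a workforce. It is a hypothetical illustration: the 3.6 saved hours per employee and the 10,000 weekly AI users are assumed inputs, chosen so that half the saved time leaks and matches the roughly 1.8 hours above. They are not benchmark figures, and the function names are our own.

```python
# Hypothetical sketch: quantify saved AI time that never becomes business value.
# All inputs are illustrative assumptions, not benchmark data.

TOP_TIER_CONVERSION = 0.75  # threshold reached by only 5% of enterprises (from the text)


def value_conversion_rate(hours_saved: float, hours_captured: float) -> float:
    """Fraction of saved hours converted into measurable business value."""
    if hours_saved <= 0:
        raise ValueError("hours_saved must be positive")
    return hours_captured / hours_saved


def weekly_leaked_hours(hours_saved: float, hours_captured: float, weekly_users: int) -> float:
    """Hours per week lost to organizational friction across all weekly AI users."""
    return (hours_saved - hours_captured) * weekly_users


saved, captured, users = 3.6, 1.8, 10_000  # assumed per-employee hours and headcount
rate = value_conversion_rate(saved, captured)
print(f"conversion rate: {rate:.0%}")  # conversion rate: 50%
print(f"top-tier conversion: {rate >= TOP_TIER_CONVERSION}")  # top-tier conversion: False
print(f"leaked hours per week: {weekly_leaked_hours(saved, captured, users):,.0f}")  # leaked hours per week: 18,000
```

Replacing the assumed inputs with your own survey and time-tracking figures turns the leak into a number a budget review can act on.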

The fix requires specificity. Every AI deployment should have a defined capacity reinvestment target before going live, such as more transactions processed per analyst, faster compliance cycles, or shorter customer response times. Without that target, time savings show up in employee surveys but never reach the P&L.

Mistaking fast payback for structural impact

Early AI wins are seductive. A copilot deployment generates visible productivity improvements in weeks. Or a summarization tool compresses research time by 30%. These quick payback stories make excellent internal case studies. They also create a false sense of arrival.

Our research reveals the trap. Of the enterprises we surveyed, 42% sit in the 10-20% ROI range. Comfortable, but structurally unchanged. Meanwhile, cost reduction correlates most strongly not with tool adoption but with workflow automation. And while 80% of enterprises report at least a 10% operational cost decrease, only 6.7% achieve 40% or more.

The distinction that matters is between assistance and automation. Assistance means AI helps humans work faster. Automation means AI executes workflow steps. Payback period measures the first. Structural ROI requires the second.

A copilot that saves an analyst two hours per week is assistance. An AI system that automatically routes compliance exceptions, validates data against regulatory requirements, and triggers downstream actions without waiting for human interpretation is automation. The economics are fundamentally different.

Treating adoption as success

High adoption metrics feel like validation. They’re among the most dangerous vanity metrics in enterprise AI. And the benchmark shows why.

Adoption is broad. Roughly 85% of enterprises report that at least a quarter of employees use AI weekly. But depth is thin. Only 12.5% report that more than 75% of employees use it weekly, and genuine workflow embedding, where AI operates inside the process rather than alongside it, remains rare at roughly 12%.

The implication is counterintuitive. Without execution paths, higher adoption actually increases activity without improving returns. More people using AI means more outputs generated, more summaries created, more recommendations surfaced. But if those outputs sit in email threads and slide decks rather than triggering system actions, adoption creates noise without creating value.

Production-ready AI operates within business processes, not adjacent to them. That means adoption belongs on the dashboard as a hygiene metric, not a success metric. The question that matters isn't how many people use AI. It’s how many workflows have AI embedded in their execution path, closing the loop between output and action.

Staying stuck in pilot metrics

This is what we call the maturity trap, and it catches even organizations that are doing almost everything right. The pattern is predictable. A team launches an AI pilot. They define success around time saved, error reduction, and task completion rates. The pilot succeeds and the program scales.

Then new use cases are added. Adoption grows, but ROI flattens. Not because AI stopped delivering value, but because the measurement framework stopped evolving. The primary value drivers shift across maturity stages, and the metrics have to shift with them.

Pilot-stage organizations optimize for risk reduction and cost control. Scaled organizations should be measuring revenue growth and innovation velocity. Industrialized programs should be tracking structural cost changes, customer experience transformation, and competitive positioning. But most organizations carry their pilot metrics into every subsequent stage, measuring a scaled program with the same yardstick they used for a three-month proof of concept.

The strongest driver of executive satisfaction with AI isn't speed, throughput, or cost reduction. It’s quality improvement. Yet quality, measured as defect rates, rework frequency, audit pass rates, and escalation volumes, rarely appears as a first-class metric in enterprise AI dashboards.

The result is a perception gap. AI is delivering value that the measurement framework can’t see. Leadership concludes that ROI has plateaued. In reality, the metrics have plateaued while the opportunity has expanded.
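One way to keep the measurement framework from freezing at the pilot stage is to make the expected metrics an explicit function of maturity stage, with quality tracked throughout. The sketch below is hypothetical: the stages and value drivers follow the text, but the metric identifiers and the rule of tracking quality at every stage are illustrative assumptions, not the benchmark's methodology.

```python
from enum import Enum


class Stage(Enum):
    PILOT = "pilot"
    SCALED = "scaled"
    INDUSTRIALIZED = "industrialized"


# Primary value drivers per maturity stage (metric names are hypothetical labels).
STAGE_METRICS: dict[Stage, list[str]] = {
    Stage.PILOT: ["risk_reduction", "cost_control"],
    Stage.SCALED: ["revenue_growth", "innovation_velocity"],
    Stage.INDUSTRIALIZED: [
        "structural_cost_change",
        "customer_experience",
        "competitive_positioning",
    ],
}

# Assumption: quality is tracked as a first-class metric at every stage.
QUALITY_METRICS: list[str] = [
    "defect_rate",
    "rework_frequency",
    "audit_pass_rate",
    "escalation_volume",
]


def expected_metrics(stage: Stage) -> list[str]:
    """Metrics a dashboard should track at the given maturity stage."""
    return STAGE_METRICS[stage] + QUALITY_METRICS


def missing_metrics(stage: Stage, dashboard: set[str]) -> list[str]:
    """Expected metrics the current dashboard does not track, in declaration order."""
    return [m for m in expected_metrics(stage) if m not in dashboard]


# A scaled program still reporting only its pilot-era metrics.
pilot_dashboard = {"time_saved", "error_reduction", "task_completion_rate"}
print(missing_metrics(Stage.SCALED, pilot_dashboard))
```

For the scaled program in the example, every expected metric comes back as missing, which is the perception gap described above in miniature: the dashboard measures what the pilot cared about, not what the scaled program delivers.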

Accepting "good enough" ROI

The final mistake is the quietest: the decision to stop pushing for more. The 10-20% ROI range feels reasonable. It justifies continued investment. It keeps the program funded. And it’s exactly where 42% of enterprises in the benchmark have settled. But the gap between the median and the top 7% isn't an incremental improvement. It is a structural divide.

The top 7% achieve 40% or higher ROI. They average approximately 63% workflow automation and 71% value conversion. They produce roughly 4.25 measurable value hours per employee per week. They maintain quality scores of 9 or above on a 10-point scale and satisfaction ratings of 9 to 10. And critically, they show early tool consolidation and embed AI outputs directly into systems of action.

Laggards, by contrast, convert 30% or less of AI-generated value, operate with low automation and high tool sprawl, score 6 or below on quality, and leave outputs stranded in dashboards and reports.

The difference between the middle and the top isn't budget, headcount, or model sophistication. It’s execution discipline. Quality instrumentation that measures reliability, not just speed. Portfolio governance that prevents tool sprawl from fragmenting the value chain. And deliberate reinvestment of reclaimed capacity into higher-value work.

Closing the execution discipline gap

Menlo Ventures estimates that enterprises spent $37 billion on generative AI in 2025, and that spending is accelerating. The question boards will increasingly ask isn't "are we investing in AI?" but "what have those investments changed about how we operate?"

Organizations still measuring adoption and time savings will struggle to answer. Organizations that have shifted to measuring captured value, workflow automation, and quality will have the data to justify continued investment and the architecture to compound returns.

The five mistakes we covered share a common root. Companies treat AI as a tool adoption challenge rather than a workflow transformation program. But closing the distance between AI output and business action is entirely within your organization's control.

So which route are you going to choose?

The findings in this blog were drawn from research across 255 enterprise leaders. See the complete benchmark data, correlation analysis, and action framework in the full Enterprise AI ROI: 2026 Benchmarks report.
