
The all-too-common retail AI implementation story goes like this. A retailer identifies a high-value use case, typically demand forecasting or inventory optimization. They spend three months on vendor selection. Six months on data readiness and integration work. Another six months on model training and validation. Two months on change management and training. And then they go live, 17 months later, into a market that has already moved.

The competitors they were trying to catch have had three product cycles. The AI model is trained on data that predates several significant demand shifts. And the organization is exhausted from the implementation effort before it's had a chance to capture any value.

This pattern is so common it's become the accepted cost of enterprise AI adoption. It shouldn't be. The 18-month implementation timeline for inventory intelligence isn't a technical constraint. It's an architectural one. And fast AI deployment is possible when you build on an architecture designed for it rather than retrofitting AI onto a traditional enterprise data stack. The rest of this article explains how.

The 18-month implementation timeline has become the standard for a well-understood reason. Traditional AI deployments in retail are architected around centralized data. Before an AI system can do anything useful with inventory data, all of that data needs to be in the same place, in the same schema, with the same definitions. That means data migration. That means schema harmonization across ERP, WMS, POS, and supplier systems.

That also means months of data quality remediation work before any AI model sees a single training record. And that means a sequence of project dependencies that can't be parallelized and can't be accelerated beyond the rate at which legacy systems can be rationalized.
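To make the dependency chain concrete, here is a minimal sketch that adds up the phases from the story at the top of this article. The durations are the ones quoted there; the function names are illustrative only, and the model assumes each phase has to wait for the one before it.

```python
# Phase durations in months, taken from the implementation story above.
PHASES = [
    ("vendor selection", 3),
    ("data readiness and integration", 6),
    ("model training and validation", 6),
    ("change management and training", 2),
]


def sequential_duration(phases):
    """Total elapsed months when every phase waits for the previous one."""
    return sum(months for _, months in phases)


def start_months(phases):
    """Month at which each phase can begin in a strictly sequential plan."""
    starts, elapsed = {}, 0
    for name, months in phases:
        starts[name] = elapsed
        elapsed += months
    return starts


total_months = sequential_duration(PHASES)
phase_starts = start_months(PHASES)

print(total_months)                                   # 17
print(phase_starts["model training and validation"])  # 9
```

In this plan no model sees a training record until month nine, which is the cost of putting centralization first.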

The alternative architecture, the one that produces functional inventory intelligence in days rather than months, starts from a different assumption. Data doesn't need to be centralized before it can be intelligent. It needs to be connected. A knowledge fabric that creates a semantic layer across existing systems allows AI models to operate on data in place, without requiring migration.
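A rough sketch of what "connected, not centralized" can look like in code follows. It is not Unframe's implementation. The two source systems, their field names, and the `FIELD_MAP` structure are all hypothetical, chosen only to show canonical names being resolved at read time while each system keeps its own schema.

```python
from typing import Dict, Iterator, List

# Hypothetical source systems, each with its own native field names.
ERP_ROWS = [
    {"material_no": "SKU-1", "qty_unrestricted": 40},
    {"material_no": "SKU-2", "qty_unrestricted": 0},
]
POS_ROWS = [
    {"item_code": "SKU-1", "units_sold": 12},
    {"item_code": "SKU-2", "units_sold": 7},
]
SOURCES = {"erp": lambda: ERP_ROWS, "pos": lambda: POS_ROWS}

# The semantic layer: canonical field name -> native field name, per system.
FIELD_MAP: Dict[str, Dict[str, str]] = {
    "erp": {"sku": "material_no", "on_hand": "qty_unrestricted"},
    "pos": {"sku": "item_code", "units_sold": "units_sold"},
}


def read(system: str, fields: List[str]) -> Iterator[dict]:
    """Yield rows from one system, translated to canonical names on the fly."""
    mapping = FIELD_MAP[system]
    for row in SOURCES[system]():
        yield {f: row[mapping[f]] for f in fields}


def inventory_view() -> Dict[str, dict]:
    """Combine stock and sales by canonical SKU without a shared schema."""
    view: Dict[str, dict] = {}
    for r in read("erp", ["sku", "on_hand"]):
        view.setdefault(r["sku"], {})["on_hand"] = r["on_hand"]
    for r in read("pos", ["sku", "units_sold"]):
        view.setdefault(r["sku"], {})["units_sold"] = r["units_sold"]
    return view


view = inventory_view()
at_risk = [sku for sku, v in view.items() if v["on_hand"] < v["units_sold"]]
print(at_risk)  # ['SKU-2']
```

Adding another system in this sketch means adding one mapping entry, not migrating its data.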

A modular building-block architecture allows inventory use cases to be composed from pre-built components rather than custom-built from scratch. And an enterprise integration layer with pre-built connectors for SAP, Salesforce, NetSuite, and major POS systems eliminates the months of integration work that typically precede any AI deployment.
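The composition idea can be illustrated with a few lines of Python. Everything here is an assumption made for the example: the `Connector` protocol, the `StaticConnector` stand-in, and the two steps are hypothetical and do not describe any vendor's actual API.

```python
from dataclasses import dataclass
from typing import Callable, List, Protocol


class Connector(Protocol):
    """Anything that can return rows from a source system."""

    def fetch(self) -> List[dict]: ...


@dataclass
class StaticConnector:
    """Stand-in for a pre-built connector; a real one would call the source system."""

    rows: List[dict]

    def fetch(self) -> List[dict]:
        return list(self.rows)


Step = Callable[[List[dict]], List[dict]]


def below_reorder_point(rows: List[dict]) -> List[dict]:
    """Keep only SKUs whose stock has fallen to or below the reorder point."""
    return [r for r in rows if r["on_hand"] <= r["reorder_point"]]


def propose_orders(rows: List[dict]) -> List[dict]:
    """Order enough units to bring each SKU back up to its target level."""
    return [{"sku": r["sku"], "order_qty": r["target"] - r["on_hand"]} for r in rows]


def compose(connector: Connector, steps: List[Step]) -> Callable[[], List[dict]]:
    """Build a use case by chaining reusable steps behind a connector."""

    def run() -> List[dict]:
        rows = connector.fetch()
        for step in steps:
            rows = step(rows)
        return rows

    return run


replenishment = compose(
    StaticConnector([
        {"sku": "A", "on_hand": 5, "reorder_point": 10, "target": 30},
        {"sku": "B", "on_hand": 50, "reorder_point": 10, "target": 30},
    ]),
    [below_reorder_point, propose_orders],
)
orders = replenishment()
print(orders)  # [{'sku': 'A', 'order_qty': 25}]
```

Swapping the connector or reordering the steps yields a different use case without rewriting either piece.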

The skepticism about fast AI deployment in enterprise retail is reasonable. The claim that meaningful inventory intelligence can be operational in days sounds like a proof-of-concept pitch, not an enterprise delivery promise. The distinction matters.

A proof of concept that demonstrates a model can predict demand against a cleaned sample dataset isn't inventory intelligence. It's a science fair project. Enterprise inventory intelligence is a system operating in production on real data from real systems, handling real exception cases, and producing decisions that operations teams actually trust.

The mechanism that makes genuine fast enterprise deployment possible is the blueprint architecture. Rather than building each inventory solution from scratch, Unframe's blueprint system provides orchestration specifications for proven inventory use cases: pre-tested patterns for demand forecasting, replenishment automation, reconciliation, and vendor compliance monitoring that have already been validated in production retail environments.

The integration connectors are pre-built. The data extraction models for common document types are pre-trained. AI agents and workflow automation building blocks are modular and composable. The work that remains is configuring this pre-built architecture to the specific environment, data sources, and operational parameters of the retailer. That's a days-to-weeks exercise, not a months-to-years one.
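As a rough illustration of "configure, don't build", the sketch below treats a blueprint as a set of defaults plus required data sources, and a deployment as the retailer-specific overrides. The `Blueprint` and `Deployment` classes, the parameter names, and the source identifiers are hypothetical and are not taken from Unframe's product.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class Blueprint:
    """Hypothetical pre-tested pattern: what it needs and its default settings."""

    name: str
    required_sources: List[str]
    default_params: Dict[str, float]


@dataclass
class Deployment:
    """Retailer-specific configuration layered on top of a blueprint."""

    blueprint: Blueprint
    sources: Dict[str, str]
    params: Dict[str, float] = field(default_factory=dict)

    def missing_sources(self) -> List[str]:
        """Required data sources that have not been connected yet."""
        return [s for s in self.blueprint.required_sources if s not in self.sources]

    def effective_params(self) -> Dict[str, float]:
        """Blueprint defaults, overridden by any retailer-specific values."""
        return {**self.blueprint.default_params, **self.params}


replenishment_bp = Blueprint(
    name="replenishment_automation",
    required_sources=["erp", "pos"],
    default_params={"service_level": 0.95, "review_period_days": 7},
)

deployment = Deployment(
    blueprint=replenishment_bp,
    sources={"erp": "erp-prod", "pos": "pos-eu"},  # illustrative identifiers
    params={"review_period_days": 1},
)

print(deployment.missing_sources())   # []
print(deployment.effective_params())  # {'service_level': 0.95, 'review_period_days': 1}
```

The retailer-specific part is a handful of values. The logic they parameterize is already written and tested.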

The retail services chain that achieved 97% reconciliation accuracy across hundreds of locations didn't go through an 18-month implementation. The Fortune 50 CPG company that recovered $35M in trade spend by compressing promotion planning from days to minutes didn't build its solution from scratch. Both deployed on a pre-built architecture that was configured to their environment rather than engineered from the ground up.

The ROI calculation for AI deployment timelines is typically framed as a cost question. Faster deployment means lower implementation costs. That's true but understated. The more significant financial argument is the opportunity cost of delayed intelligence. Global inventory distortion costs retail $1.77 trillion annually.
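The opportunity-cost argument reduces to simple arithmetic: value not captured per month, multiplied by months of delay. The sketch below uses purely hypothetical inputs (a retailer losing $12M a year to inventory distortion, a quarter of which is assumed recoverable). None of these figures come from a real deployment.

```python
def cost_of_delay(annual_distortion_cost, recoverable_share, months_delayed):
    """Value left on the table while the system is not yet in production."""
    monthly_value = annual_distortion_cost * recoverable_share / 12
    return monthly_value * months_delayed


# Hypothetical inputs, for illustration only.
ANNUAL_DISTORTION_COST = 12_000_000  # dollars lost per year to stockouts and overstock
RECOVERABLE_SHARE = 0.25             # assumed fraction the system could recover

slow = cost_of_delay(ANNUAL_DISTORTION_COST, RECOVERABLE_SHARE, months_delayed=17)
fast = cost_of_delay(ANNUAL_DISTORTION_COST, RECOVERABLE_SHARE, months_delayed=1)

print(slow)         # 4250000.0
print(fast)         # 250000.0
print(slow - fast)  # 4000000.0
```

Under these assumptions the slower path forgoes $4M before go-live, separate from whatever the implementation itself costs.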

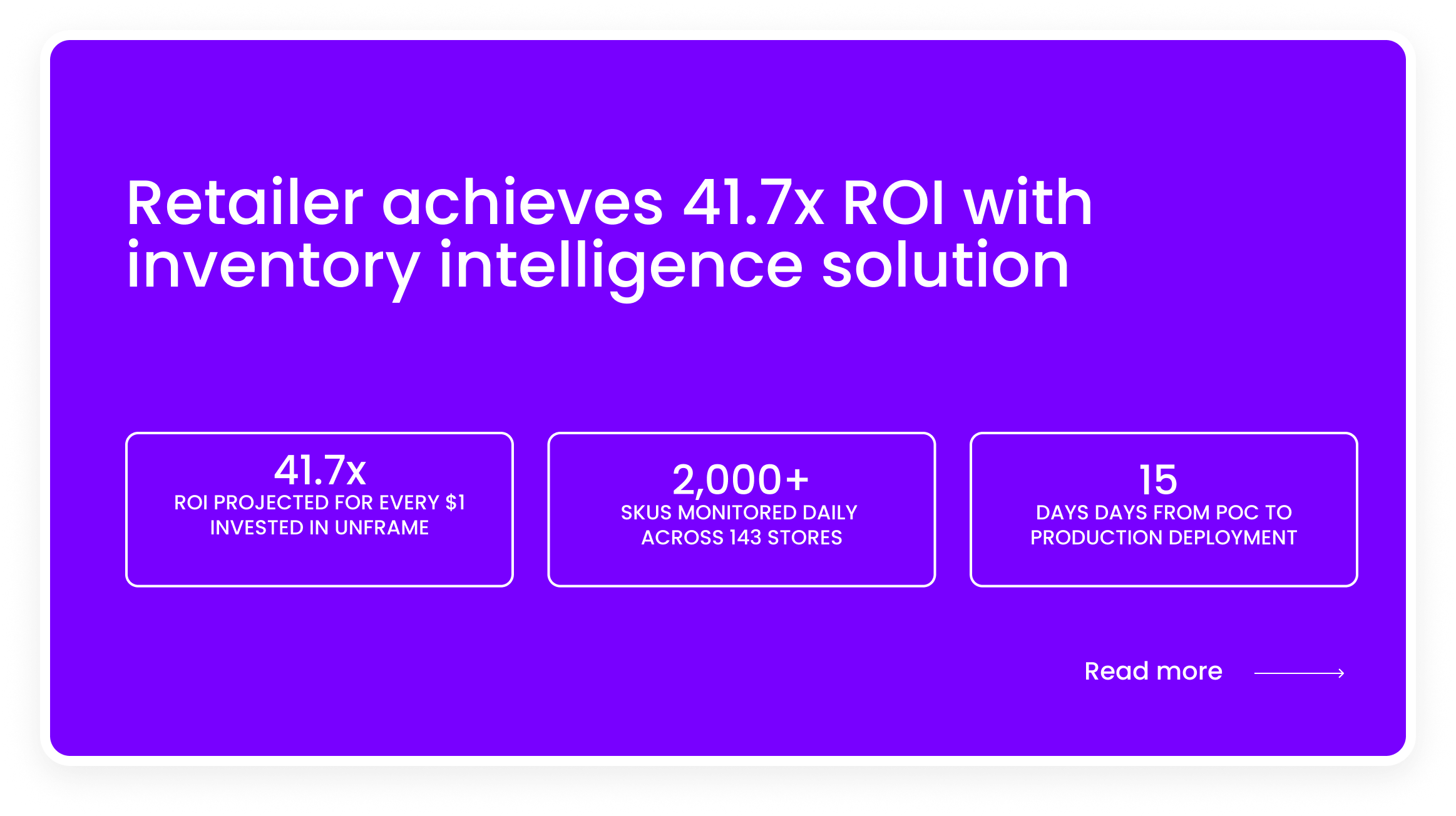

Let's take a look at the numbers in a real-world scenario:

The competitive dynamic compounds this. Retailers implementing AI see stockout reductions of 35% and excess inventory drops of 28% compared to pre-AI baselines. The retailers who've been running AI-powered inventory systems for 18 months while their competitors were implementing don't just have better numbers. They've generated 18 months of operational learning that makes their systems progressively more accurate.

The traditional argument for long, careful AI implementations is risk management. A phased, thorough approach reduces the chance of deploying a system that makes bad decisions at scale. That argument has merit in some contexts. In retail inventory, it has a hidden assumption that undermines it: that the status quo is risk-neutral. It isn't.

Operating without inventory intelligence in a market where your competitors are deploying it isn't a conservative risk position. It's an active risk position. The 69% of customers who switch retailers after encountering a stockout aren't waiting for your implementation project to finish.

The suppliers whose compliance failures are creating ripple effects through your supply chain aren't pausing their behavior while you complete data readiness work. The risk of moving slowly isn't zero. It's just less visible than the risk of moving fast, which makes it easier to underweight in the project prioritization conversation.

Fast deployment with modular architecture, pre-built connectors, and a blueprint-driven configuration process doesn't eliminate deployment risk. But it concentrates the risk into a short window and produces operational feedback quickly enough to course-correct before small problems become expensive ones.

That's the actual conservative choice. The organization that's been running production inventory intelligence for 90 days has identified and resolved its edge cases before the organization that spent 90 days in data readiness has written its first SQL transformation.

If your inventory intelligence initiative is currently measured in quarters rather than weeks, the question worth asking your implementation team isn't "how do we accelerate?" It's "what in our current architecture requires us to move this slowly, and does it have to?"

The answer to that second question is usually more negotiable than the project plan suggests. See how Unframe delivers production-ready inventory intelligence without the implementation marathon.