AI ROI: 2026 Benchmarks

Download Report

There's a pattern we've been seeing play out lately and it’s about time to address it. A company decides to get serious about AI. Leadership greenlights a substantial budget. The team gets excited. Then someone raises their hand and asks the obvious question, "But what about our data?"

And just like that, the AI initiative becomes a data cleanup project. Months pass. Budgets shift. The original business case gathers dust while engineers wrestle with schema migrations and deduplication logic. By the time anyone looks up, the competitive window has closed.

RAND Corporation research shows that over 80% of AI projects fail, which is twice the failure rate of IT projects that don't involve AI. The knee-jerk reaction is to blame the technology. But the real culprit is us. We've convinced ourselves that enterprise data integration must be complete before AI can begin.

That assumption is killing more AI initiatives than any technical limitation ever could.

Every enterprise has fragmented data. This isn't a failure of planning or discipline. It's the natural consequence of how businesses actually evolve.

Marketing adopted Salesforce five years ago. Finance runs on an ERP system that predates half the current workforce. Last quarter's acquisition brought three legacy platforms that nobody has figured out how to integrate yet. Support tickets live in Zendesk, contracts in SharePoint, and critical institutional knowledge in the email threads of employees who left two jobs ago.

The conventional wisdom says to fix this fragmentation before attempting anything sophisticated. Standardize the formats. Deduplicate the records. Build a master data repository. The Informatica CDO Insights 2025 survey found that 43% of Chief Data Officers cite data quality and readiness as their top obstacle to AI success.

But here's what nobody talks about. That cleanup project everyone just prioritized has no finish line. Schemas change. New tools get adopted. Departments spin up their own solutions. The data hygiene initiative becomes permanent overhead rather than a phase you complete. And while you're waiting for perfect data, competitors with messier data and better architectures are shipping AI solutions that actually work.

The traditional response to scattered data is centralization. Build a data lake. Architect a warehouse. Launch a massive ETL initiative that will finally bring everything into one queryable repository.

We've watched these projects unfold for years. They almost always disappoint.

The fundamental problem is that consolidation assumes data can hold still long enough to be centralized. It can't. Business moves faster than integration timelines. By the time your data warehouse reaches production, new SaaS tools have been adopted, a department has spun up its own analytics instance, and an acquisition has introduced three systems that weren't in the original architecture.

ETL pipelines are brittle by design. They break with every schema change, every new field, every upstream modification. Maintaining them becomes a full-time job for engineers who should be building something valuable. And the "single source of truth" you worked so hard to create? It becomes just another source competing with the systems people actually trust.

The cruelest irony is that centralization often accelerates fragmentation. The warehouse lags behind operational systems. Business users route around it. Shadow analytics proliferate. You end up with more silos than you started with, plus the maintenance burden of a centralized system that nobody fully trusts.

The enterprises getting real value from AI aren't waiting for clean data. They're using architectures that work with fragmented data instead of against it.

The shift is conceptual as much as technical. Instead of asking "How do we consolidate all this data?" the question becomes "How do we make AI fluent in data wherever it already lives?" This reframe matters because it stops treating fragmentation as a problem to be solved before AI can begin — and starts treating it as the normal condition that AI architecture must be built to handle.

The companies that have made this shift share a common pattern: they stopped investing in data preparation as a prerequisite and started investing in intelligence infrastructure that works with data as it actually exists — messy, distributed, and constantly changing. The result isn't just faster AI deployment. It's AI that reflects how the business actually operates, not a sanitized version of it that's already out of date by the time it's been cleaned.

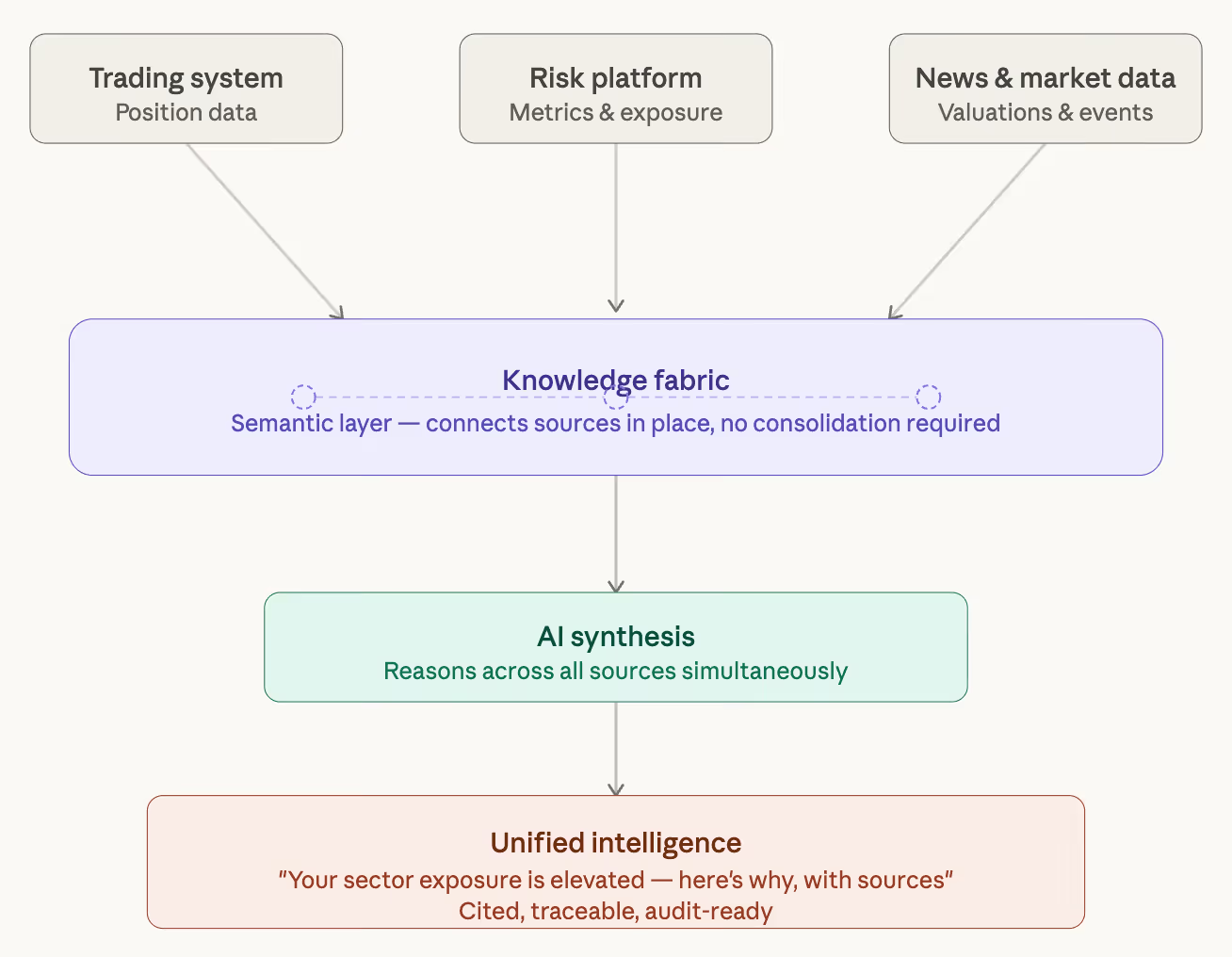

This is the principle behind what's increasingly called a knowledge fabric — a semantic layer that connects existing sources without requiring them to move. Rather than centralizing data into a warehouse, a knowledge fabric creates relationships and context across systems. It extracts fields, entities, clauses, tables, numbers, and narratives across structured, semi-structured, and unstructured documents—then normalizes and validates that data against business logic, flagging exceptions only where needed. It understands that the customer record in your CRM, the contract in your document management system, and the support ticket in your service platform are all connected, even if they've never been formally integrated. And it doesn't stop at connection—it surfaces higher-order intelligence: patterns, obligations, risks, trends, and KPI insights that would otherwise stay buried across silos.

The technical elegance is that the fabric operates at the source. No duplication, no migration, no bottleneck where everything flows through a central repository. Governance happens where data lives, not retrofitted after a consolidation project that may never finish.

More importantly, the fabric adapts as the enterprise evolves. New systems get connected rather than queued for integration. Schema changes get absorbed. Acquisitions become queryable without multi-year harmonization efforts. The architecture bends with the business instead of demanding the business freeze while infrastructure catches up.

Here's the real unlock: the goal was never unified data. The goal was unified intelligence—the ability to get coherent, contextual answers from incoherent sources.

Consider what this looks like in practice. A portfolio manager needs to understand sector exposure. The answer requires combining position data from the trading system, risk metrics from a separate platform, and recent news that might affect valuations. Traditional enterprise search would return documents from each system and leave the synthesis to the human. AI powered business intelligence built on a knowledge fabric delivers a single answer, grounded in data from all three sources, with citations the user can verify.

Or consider regulatory compliance. Demonstrating adherence requires connecting policy documents to implementation workflows to audit trails that prove execution. Without unified intelligence, this is a manual exercise repeated painfully before every examination. With it, the connections are always live, always queryable, always audit-ready.

The characteristics that matter are consistency and context. Answers reflect how the business actually operates, not generic model outputs that miss your terminology, processes, and constraints. Metadata and lineage travel with every response, making answers traceable and explainable. The system updates dynamically—a new document, a workflow change, a policy revision gets reflected immediately without rebuilding indexes or retraining anything.

For decades, the enterprise playbook has been clear: clean your data, then do interesting things with it. This sequence made sense when the only path to insight was structured databases that demanded conformity before they could function.

That era is over.

Modern AI architectures can reason over messy, heterogeneous, fragmented data. The same capabilities that let large language models understand natural language let enterprise AI understand the natural disorder of real business information. A knowledge fabric doesn't require pristine inputs. It builds context and relationships on top of whatever exists, connecting rather than consolidating, adapting rather than demanding perfection.

This isn't about lowering standards. It's about recognizing that AI can now handle the contextual heavy lifting that used to require humans to pre-clean and pre-structure everything. The preprocessing step that once took months or years can now happen dynamically, continuously, as data flows through systems that were never designed to work together.

What does this mean practically? It means you can stop waiting. Solutions that once required extensive data preparation now deploy in days. The business case you shelved because data wasn't ready can come off the shelf. The competitive advantage you've been deferring becomes available now.

The enterprises still treating data hygiene as a prerequisite for AI are asking the wrong question. Data is never clean enough. It's always fragmenting, always accumulating inconsistencies, always evolving faster than any cleanup initiative can keep pace.

The right question isn't "When will our data be ready?" It's "Why are we still using approaches that demand ready data in the first place?"

Increasingly, you don't have to.

Every AI solution Unframe delivers has its own Knowledge Fabric instance delivering unified intelligence from fragmented enterprise data. No consolidation project, no data hygiene prerequisite, no waiting. See how it works.

Tell us the use case. We'll show you what's possible - live, on your data, in days.