Overview

Scaling AI agents in the enterprise requires more than tools that can run individual workflows. You need platforms designed for governance, observability, and long-term operational control. Choosing one comes down to understanding the architectural capabilities that let agents operate reliably across complex systems at scale.

- Not all AI tools are built for enterprise-scale agent deployment

- A centralized control plane is essential for visibility and governance

- Pre-production testing and observability are critical for risk management

- Shared enterprise knowledge improves consistency and relevance of outputs

- Multi-model flexibility prevents vendor lock-in and supports strategic control

Most evaluations go wrong by overlooking the difference between a platform that lets you build agents and one that lets you govern and customize agents within delivered solutions.

The market for enterprise AI agent platforms is moving fast, and the vocabulary is outpacing the substance. Every vendor promises orchestration, governance, and scale. Few are specific about what that means in practice - and for a CIO signing off on enterprise-wide deployment, vague promises are not enough.

This guide is designed to cut through the noise. It outlines the capabilities that actually matter when evaluating an enterprise AI agent platform, the questions worth asking vendors, and the architectural principles that separate platforms built for enterprise realities from those that merely look the part.

Why generic AI tools are not enterprise AI agent platforms

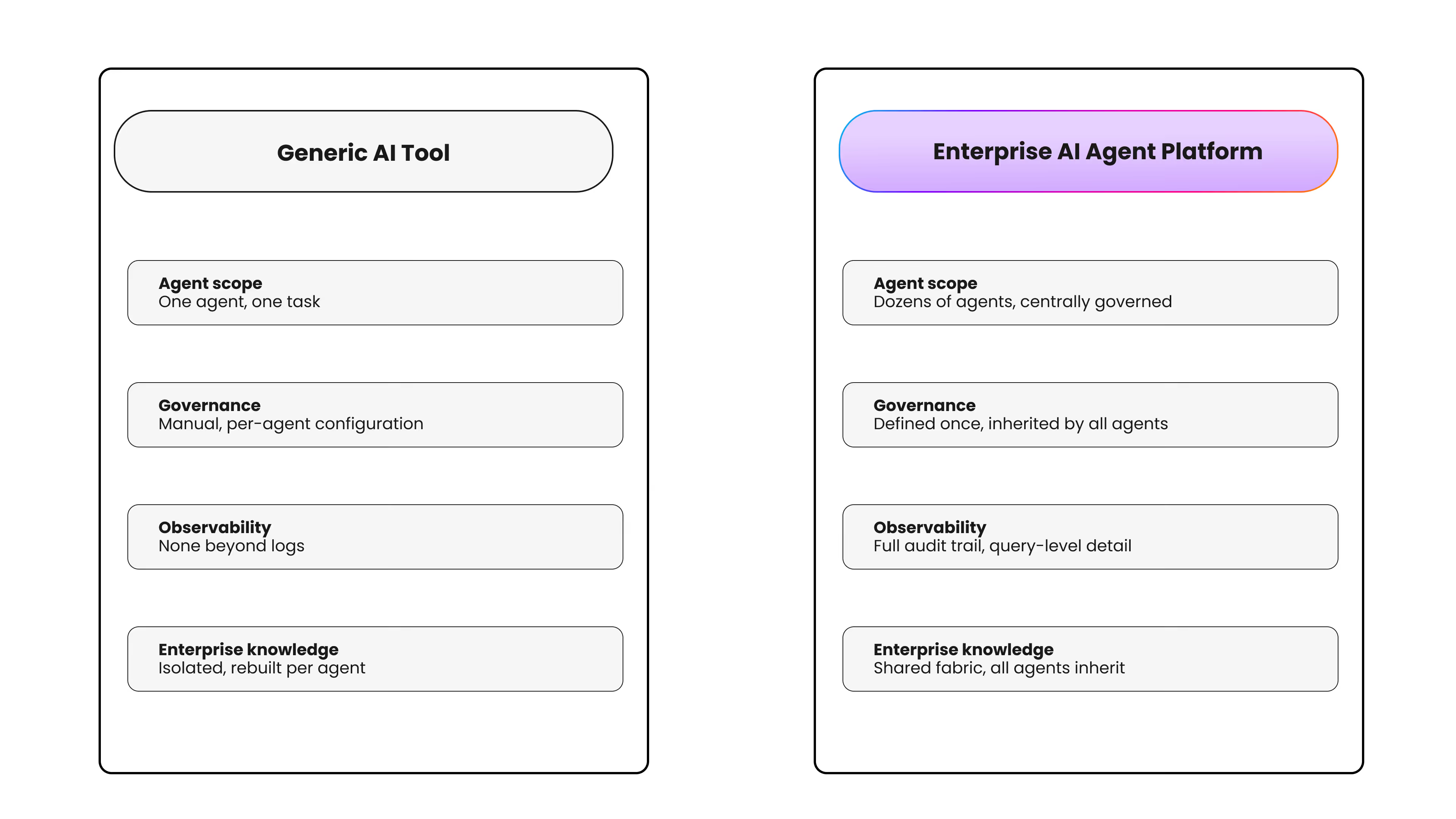

The first distinction worth making is between tools that can run an AI agent and platforms that can govern many agents operating across complex enterprise systems.

Most AI tooling was designed for the former. It handles individual tasks well in controlled, low-stakes environments. But enterprise deployments look nothing like that. They involve dozens of agents operating concurrently across finance, operations, compliance, customer service, and HR - each touching different systems, different data, and different user populations. The governance, observability, and integration requirements of that environment are categorically different.

A platform evaluation that does not start from this distinction will end up selecting a tool that works in the demo and struggles in production.

Six capabilities that define a true enterprise AI agent platform

1. A centralized control plane

Enterprise AI at scale requires a single place where agents are created, configured, governed, and monitored. Without this, organizations end up with fragmented agent management - different teams using different tools, with no unified visibility into what is running, what it is doing, or whether it is operating within policy.

The right platform gives administrators a central control surface that covers the full agent lifecycle: from initial configuration and testing through to production deployment and ongoing observability.

Ask vendors: Where do I go to see every agent running in my environment, and what it is doing right now?
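
To make the vendor question concrete, here is a minimal sketch of the kind of registry a control plane might keep. Every name in it (`AgentRegistry`, `AgentRecord`, `AgentState`, the agent and team names) is hypothetical and does not describe any particular product; it only illustrates the idea of one place that can answer "what is running, and what is it doing".

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class AgentState(Enum):
    DRAFT = "draft"
    TESTING = "testing"
    PRODUCTION = "production"


@dataclass
class AgentRecord:
    name: str
    owner_team: str
    state: AgentState = AgentState.DRAFT
    last_action: str = "registered"
    updated_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class AgentRegistry:
    """Hypothetical single source of truth for every agent in the environment."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}

    def register(self, name: str, owner_team: str) -> AgentRecord:
        if name in self._agents:
            raise ValueError(f"agent {name!r} is already registered")
        record = AgentRecord(name=name, owner_team=owner_team)
        self._agents[name] = record
        return record

    def report(self, name: str, state: AgentState, action: str) -> None:
        # Agents report lifecycle changes and current activity to one place.
        record = self._agents[name]
        record.state = state
        record.last_action = action
        record.updated_at = datetime.now(timezone.utc)

    def running(self) -> list[AgentRecord]:
        # Answers "what is live right now?" across all teams, sorted by name.
        live = [a for a in self._agents.values() if a.state is AgentState.PRODUCTION]
        return sorted(live, key=lambda a: a.name)


registry = AgentRegistry()
registry.register("invoice-matcher", owner_team="finance")
registry.register("policy-checker", owner_team="compliance")
registry.report("invoice-matcher", AgentState.PRODUCTION, "reconciling invoices")

for agent in registry.running():
    print(agent.name, agent.owner_team, agent.last_action)
```

The point of the sketch is the single lookup path: if different teams keep separate registries, no one query can list every live agent.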

2. Pre-production testing and validation

One of the highest-risk moments in enterprise AI deployment is moving an agent from a test environment into production without adequate validation. Agents that behave correctly in isolation can behave unexpectedly when connected to live systems and real data.

A serious platform provides structured, interactive testing capabilities - allowing teams to simulate agent behavior, evaluate how agents query and interpret data, and validate outputs before any production exposure. This is not optional. For regulated industries in particular, it is a prerequisite.

Ask vendors: How do I test an agent's behavior against real enterprise data before it goes live - and what does that process look like?
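
As a rough illustration of what a validation gate could look like, the sketch below runs an agent against a fixed set of cases and blocks promotion if any case fails. It assumes an agent can be treated as a function from prompt to text; `ValidationCase`, `validate_agent`, and `stub_agent` are hypothetical names, and the substring check stands in for whatever evaluation a real platform would apply.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ValidationCase:
    prompt: str
    must_contain: str  # simplistic stand-in for a real evaluation criterion


def validate_agent(agent: Callable[[str], str], cases: list[ValidationCase]) -> list[str]:
    """Run every case and return readable failures; an empty list means pass."""
    failures = []
    for case in cases:
        output = agent(case.prompt)
        if case.must_contain.lower() not in output.lower():
            failures.append(f"{case.prompt!r}: expected {case.must_contain!r} in output")
    return failures


def stub_agent(prompt: str) -> str:
    # Stand-in for an agent under test; a real one would query enterprise data.
    if "overdue" in prompt:
        return "3 invoices are overdue"
    return "I could not find matching records"


cases = [
    ValidationCase("List overdue invoices", must_contain="overdue"),
    ValidationCase("Show open purchase orders", must_contain="purchase order"),
]

failures = validate_agent(stub_agent, cases)
ready_for_production = not failures

for failure in failures:
    print("FAILED", failure)
print("ready for production:", ready_for_production)
```

Here the second case fails, so the agent would not be promoted. The useful property is that the decision is recorded as data rather than left to a manual spot check.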

3. Deep observability and audit capability

Governance without observability is not governance - it is policy on paper. When something goes wrong with an agent in production (and at scale, something will), the platform needs to provide a clear, complete record of what the agent did, what data it accessed, what systems it interacted with, and what decisions it made.

This matters for internal accountability. It also matters for regulatory compliance in industries where data access and automated decision-making are subject to audit.

Ask vendors: Can you show me a complete audit trail for a specific agent action - down to the query level?
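
For a sense of what "down to the query level" could mean, here is a minimal sketch of an append-only audit log. The field names (`agent`, `action`, `system`, `query`, `decision`) are assumptions drawn from the paragraph above, not a real platform's schema, and the example SQL and agent name are invented for illustration.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    agent: str
    action: str
    system: str    # which system the agent touched
    query: str     # the exact query it issued
    decision: str  # what the agent decided as a result
    timestamp: str


class AuditLog:
    """Hypothetical append-only log; events are immutable once recorded."""

    def __init__(self) -> None:
        self._events: list[AuditEvent] = []

    def record(self, agent: str, action: str, system: str, query: str, decision: str) -> AuditEvent:
        event = AuditEvent(
            agent, action, system, query, decision,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self._events.append(event)
        return event

    def trail(self, agent: str) -> list[AuditEvent]:
        # Everything one agent did, in the order it happened.
        return [e for e in self._events if e.agent == agent]

    def export(self, agent: str) -> str:
        # One JSON object per line, a common shape for handing logs to auditors.
        return "\n".join(json.dumps(asdict(e)) for e in self.trail(agent))


log = AuditLog()
log.record(
    agent="invoice-matcher",
    action="lookup",
    system="erp",
    query="SELECT id, amount FROM invoices WHERE status = 'open'",
    decision="flagged 2 invoices for review",
)
print(log.export("invoice-matcher"))
```

A record like this lets someone reconstruct an action after the fact without rerunning the agent.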

4. Policy-driven governance built into the architecture

The most resilient governance model is one where policies are defined at the platform level and inherited by every agent automatically - rather than configured manually for each deployment. Data access permissions, system interaction guardrails, and operational constraints should be set once and enforced consistently across the agent fleet.

Platforms that require governance to be configured per-agent do not scale. And platforms that bolt governance on after the fact create gaps that grow with every new deployment.

Ask vendors: How are governance policies defined - and how are they enforced across all agents without per-agent configuration?
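
The "set once, enforced everywhere" idea can be sketched as a single enforcement point that every agent request passes through. The classes and limits below (`PlatformPolicy`, `GovernedPlatform`, `PolicyViolation`, the system names, the row limit) are hypothetical; the sketch assumes all agent access is routed through the platform rather than made directly.

```python
from dataclasses import dataclass


class PolicyViolation(Exception):
    """Raised when an agent request falls outside platform policy."""


@dataclass(frozen=True)
class PlatformPolicy:
    allowed_systems: frozenset
    max_rows_per_query: int


class GovernedPlatform:
    """Hypothetical choke point: agents carry no policy configuration of their own."""

    def __init__(self, policy: PlatformPolicy) -> None:
        self._policy = policy

    def run_query(self, agent: str, system: str, rows: int) -> str:
        # The same checks apply to every agent, including ones added later.
        if system not in self._policy.allowed_systems:
            raise PolicyViolation(f"{agent} may not access {system}")
        if rows > self._policy.max_rows_per_query:
            limit = self._policy.max_rows_per_query
            raise PolicyViolation(f"{agent} requested {rows} rows; limit is {limit}")
        return f"{agent} read {rows} rows from {system}"


policy = PlatformPolicy(allowed_systems=frozenset({"erp", "crm"}), max_rows_per_query=1000)
governed = GovernedPlatform(policy)

print(governed.run_query("invoice-matcher", "erp", 200))

try:
    governed.run_query("invoice-matcher", "hr-records", 10)
except PolicyViolation as exc:
    print("blocked:", exc)
```

Because the policy lives in one object, tightening a limit changes behavior for the whole fleet at once, which is the property per-agent configuration lacks.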

5. Shared enterprise knowledge as a foundation

Agents that operate in isolation from enterprise context produce generic, inconsistent outputs. The platforms worth evaluating are those that ground agents on a shared, structured layer of enterprise knowledge - connecting agents to the data, processes, terminology, and institutional context that makes their outputs actually relevant to the business.

This shared knowledge layer should be something teams can shape and extend in natural language - not something that requires engineering effort every time the business context changes.

Ask vendors: How do agents in your platform access and share enterprise knowledge - and how do we keep that knowledge current?

6. Multi-model architecture with centralized model governance

Model selection is a strategic decision that involves trade-offs across cost, performance, capability, and risk. An enterprise AI platform should support multiple model providers - giving the organization flexibility as the model landscape evolves - while keeping model governance centralized.

This means the CIO or platform team can define which models are available for which use cases, enforce cost controls, and swap providers without rebuilding agent configurations from scratch. Vendor lock-in at the model layer is a serious long-term risk.

Ask vendors: How do you handle multi-model deployments - and what does model governance look like from an administrative perspective?
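
One way to picture centralized model governance is a catalog that maps use cases to approved models, so agents never name a provider directly. This is a sketch under that assumption only; `ModelCatalog`, `ModelRoute`, the provider and model names, and the cost caps are all placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRoute:
    provider: str
    model: str
    max_cost_per_call_usd: float  # illustrative cost control


class ModelCatalog:
    """Hypothetical central mapping from use case to approved model."""

    def __init__(self) -> None:
        self._routes: dict[str, ModelRoute] = {}

    def assign(self, use_case: str, route: ModelRoute) -> None:
        # Only the platform team calls this; agents cannot change routes.
        self._routes[use_case] = route

    def resolve(self, use_case: str) -> ModelRoute:
        try:
            return self._routes[use_case]
        except KeyError:
            raise LookupError(f"no approved model for use case {use_case!r}") from None


catalog = ModelCatalog()
catalog.assign("contract-review", ModelRoute("provider-a", "large-model", 0.50))
catalog.assign("ticket-triage", ModelRoute("provider-a", "small-model", 0.01))

# Agents ask for a use case, so swapping providers needs no agent changes.
before = catalog.resolve("ticket-triage")
catalog.assign("ticket-triage", ModelRoute("provider-b", "small-model-v2", 0.01))
after = catalog.resolve("ticket-triage")

print(before.provider, "->", after.provider)
```

The indirection is the design choice that matters: agent configurations reference `"ticket-triage"`, and the provider behind it can change without touching them.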

The customize vs. build question

Some organizations will consider building this infrastructure internally. It is worth being clear about what that involves. A centralized agent control plane requires engineering investment in agent lifecycle management, observability infrastructure, policy enforcement systems, integration layers, knowledge management, and model routing - all of which need to be maintained and evolved as the agent landscape changes.

For most enterprises, the relevant question is not whether to build or buy, but whether the internal engineering investment required to build and maintain this infrastructure is a better use of resources than starting from a delivered, production-grade solution and customizing it to fit your business.

The platforms that make a strong case are those that deliver tailored solutions out of the box and then give teams governed control to customize - reducing time-to-deployment for new agents significantly, from months to hours, because the shared infrastructure, integrations, and governance guardrails already exist.

A framework for your evaluation

When assessing platforms, score each against these dimensions:
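
One way to run the scoring, as a minimal sketch: it assumes the six capabilities described earlier serve as the dimensions, a 1 to 5 scale, and equal weights unless you supply your own. None of these choices is prescribed by this guide, and the vendor scores shown are invented.

```python
from typing import Dict, Optional

# Assumed dimensions: the six capabilities discussed earlier in this guide.
DIMENSIONS = (
    "centralized control plane",
    "pre-production testing",
    "observability and audit",
    "policy-driven governance",
    "shared enterprise knowledge",
    "multi-model governance",
)


def score_platform(scores: Dict[str, int], weights: Optional[Dict[str, float]] = None) -> float:
    """Return the weighted average of 1-5 scores across all dimensions."""
    weights = weights or {d: 1.0 for d in DIMENSIONS}
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {', '.join(missing)}")
    for dimension in DIMENSIONS:
        if not 1 <= scores[dimension] <= 5:
            raise ValueError(f"score for {dimension!r} must be between 1 and 5")
    total_weight = sum(weights[d] for d in DIMENSIONS)
    return sum(scores[d] * weights[d] for d in DIMENSIONS) / total_weight


# Invented example scores for a hypothetical vendor.
vendor_a = dict(zip(DIMENSIONS, (4, 3, 5, 4, 2, 3)))

equal_weighted = score_platform(vendor_a)

# A regulated business might weight governance more heavily.
governance_heavy = {d: 1.0 for d in DIMENSIONS}
governance_heavy["policy-driven governance"] = 2.0
weighted = score_platform(vendor_a, governance_heavy)

print(round(equal_weighted, 2), round(weighted, 2))
```

Agreeing on the weights before scoring any vendor keeps the comparison from being tuned to a preferred outcome.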

What the right platform makes possible

The right enterprise AI agent platform does not just make it easier to run agents - it makes it possible to run them responsibly, at a pace the business can sustain. It gives the CIO the visibility to answer the board's questions, the governance infrastructure to satisfy the compliance team, and the operational leverage to keep delivering new agent capabilities without compounding technical debt.

But the platforms that truly stand apart are those that don't hand your teams a blank canvas. They deliver a tailored solution - and then give your teams the governed customization layer to make it theirs.

That combination - delivered solutions, governed customization, and observability in a single platform - is what separates enterprise-grade from everything else.

Connect with an expert to discover how Unframe Agent Studio provides the governed customization layer on top of delivered enterprise AI solutions, so your teams get flexibility without rebuilding from scratch.
