The AI conversation inside most enterprises has shifted. The question is no longer “which use case should we try?” It's “how do we run dozens of use cases without duplicating effort, fragmenting data access, and accumulating integration debt?”

That shift matters because of what it reveals about the current state of enterprise AI investment. Across a benchmark study of 255 enterprise leaders, 58% now run six or more AI tools and platforms. Nearly one in ten runs more than ten. The expectation is intuitive. More tools should mean more capability, more coverage, more ROI. The data says otherwise.

Tool sprawl is highest among low-ROI AI programs. Enterprises with fragmented tool portfolios consistently show lower automation rates, lower value conversion, and enterprise AI ROI suppressed by approximately 20 to 30% compared to consolidated peers. This finding doesn’t mean every enterprise should collapse its AI stack to a single vendor. But it does mean that the assumption underlying most enterprise AI strategies, that each new tool adds incremental value, breaks down past a certain threshold.

The broader market confirms the pattern. A TechCrunch survey of 24 enterprise-focused VCs found that an overwhelming majority predicted enterprises will concentrate AI spend in 2026, investing more dollars through fewer vendors as CIOs and CFOs demand measurable returns rather than experimental coverage. Understanding where the consolidation threshold sits, and what drives the compression in enterprise AI ROI, is essential for any leader evaluating how their AI portfolio is performing.

The scope of enterprise AI tool sprawl

Tool proliferation is the default outcome of how enterprises adopt AI. Most AI programs start organically. A team in compliance pilots a document processing tool. Marketing adopts a content generation platform. Finance deploys a forecasting model. Ops experiments with workflow automation. Each tool solves a real problem for a specific team. And each one introduces its own data connectors, authentication model, governance requirements, and integration surface.

The benchmark quantifies the result. Nearly half of enterprises (49%) use six to ten AI tools and platforms. Another 9% use more than ten. Use case count correlates directly with tool count: as the number of live AI applications grows, the number of platforms supporting them tends to grow in parallel. This isn't surprising. What’s surprising is that the correlation between more tools and better outcomes is weak to nonexistent once you control for how well those tools are integrated.

The report frames the dynamic clearly. Portfolio scale forces standardization. Organizations running many use cases need shared components, shared metrics, and shared controls. Without explicit consolidation or an orchestration strategy, each new use case adds a new tool, and each new tool adds a new layer of integration debt that compounds across the portfolio.

How tool sprawl suppresses ROI

The most direct cost of tool sprawl is duplicated controls across security, privacy, and approval workflows, because each tool requires its own governance layer. But the ROI impact isn't a single failure point. It emerges from three converging signals in the benchmark data.

The first signal is the proliferation pattern. As use cases increase, tools proliferate unless the organization has an explicit consolidation strategy. The correlation between live use case count and tool count confirms that most enterprises are adding platforms at roughly the same rate they're adding applications. Each new platform carries its own governance overhead, its own API integration requirements, and its own data access patterns. The cumulative effect is an AI integration landscape that grows more complex with every deployment.

The second signal connects tool fragmentation to automation and value capture. Value capture correlates most strongly with workflow automation. Workflow automation, in turn, depends on how well AI tools connect to the systems where work happens. Fragmented tool portfolios slow that connection. Each tool operates with its own data pipeline, its own output format, and its own handoff model. The result: lower automation levels, longer time to production for each new use case, and value capture rates that stall below what the underlying AI capability could deliver.

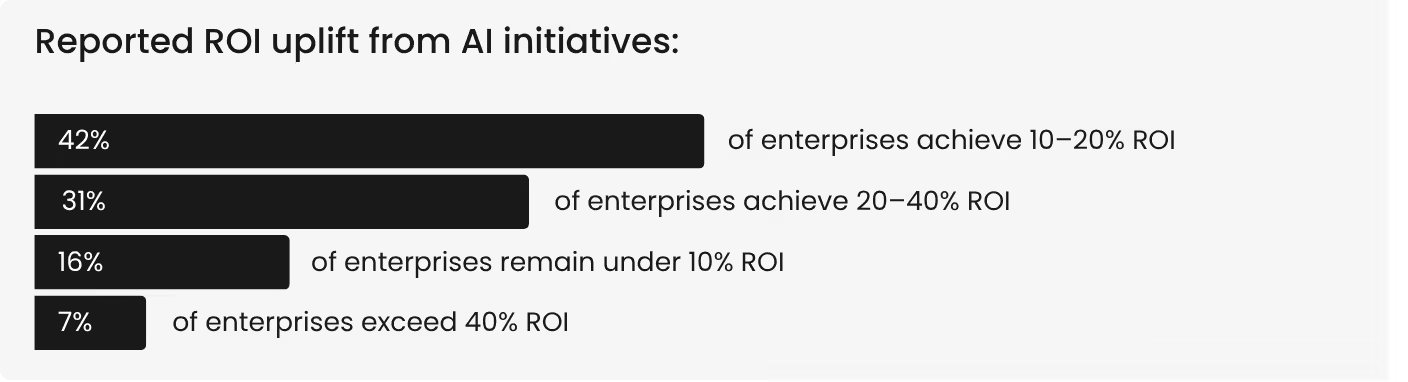

The third signal shows up in the ROI distribution itself. Most enterprises cluster at 10 to 40% ROI. Only 7% exceed 40%. When the benchmark compares high-ROI leaders against the broader population, one pattern is consistent. Leaders show early tool consolidation and deep integration across a smaller number of platforms, while lower-ROI programs show high sprawl with limited coordination between tools.

The effective ROI delta between consolidated and fragmented portfolios consistently lands in the 20 to 30% range. Tool fragmentation isn't the only driver of that gap, but it's a structural amplifier that makes every other conversion problem worse.

What high-ROI enterprises do differently

The enterprises that break through the 40% ROI threshold share a set of portfolio management disciplines that directly counter the sprawl pattern. For example, high-ROI leaders close the loop between AI output and system action. Their AI doesn’t produce recommendations that sit in dashboards waiting for human action. It feeds into execution systems, triggers workflow steps, and produces measurable changes in business metrics.

This closed-loop execution requires deep integration between AI tools and the operational systems they serve, which is structurally easier to achieve with fewer, better-integrated platforms than with many loosely connected ones.

These organizations also treat reclaimed capacity as a managed asset. When AI saves time, the saved hours are allocated to defined outcomes. They instrument quality alongside productivity, tracking not just how much AI produces but how reliable that output is across use cases. And they run AI as a portfolio with many use cases and fast development cycles, but with enough shared infrastructure to avoid duplicating effort across every deployment.

The contrast with laggards is specific. Lower-ROI programs show meaningful time savings without operational closure. The AI generates value that never reaches a business metric. Automation remains limited because fragmented tools cannot execute end-to-end workflows. Tool sprawl increases complexity faster than capability.

Quality improvements are inconsistent because each tool has its own evaluation criteria. The defining difference isn't ambition or investment level. It's the ability to close the loop between insight and outcome, and that ability depends directly on how well the AI tool portfolio is integrated.

This is where the choice of an enterprise AI platform becomes strategic rather than tactical. A modular AI platform built from shared AI building blocks, where use cases share data connectors, governance controls, and integration infrastructure, structurally avoids the sprawl tax that fragmented portfolios accumulate. It's not about choosing a single tool. It's about choosing an architecture that scales without compounding integration debt.
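To make the architectural difference concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a model taken from the benchmark: the use cases, system names, and the idea of counting one "integration surface" per connector and per governance layer are all hypothetical. It compares a portfolio where each use case brings its own tool with one where use cases share connectors and a single governance layer.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """A live AI use case and the operational systems it must reach."""
    name: str
    systems: frozenset[str]


def fragmented_surface(use_cases: list[UseCase]) -> int:
    """One tool per use case: every tool builds its own connector to each
    system it touches and carries its own governance layer."""
    connectors = sum(len(uc.systems) for uc in use_cases)
    governance_layers = len(use_cases)
    return connectors + governance_layers


def shared_surface(use_cases: list[UseCase]) -> int:
    """Shared building blocks: one connector per distinct system and a
    single governance layer reused by every use case."""
    distinct_systems = set().union(*(uc.systems for uc in use_cases))
    return len(distinct_systems) + 1


# Hypothetical portfolio, loosely mirroring the teams mentioned earlier.
PORTFOLIO = [
    UseCase("document processing", frozenset({"dms", "crm"})),
    UseCase("content generation", frozenset({"cms", "crm"})),
    UseCase("forecasting", frozenset({"erp", "crm"})),
    UseCase("workflow automation", frozenset({"erp", "ticketing"})),
]

if __name__ == "__main__":
    print("fragmented:", fragmented_surface(PORTFOLIO))
    print("shared:", shared_surface(PORTFOLIO))
```

In this toy portfolio, the fragmented count grows with every use case added, while the shared count grows only when a use case touches a system no other use case already reaches. The absolute numbers are illustrative; the shape of the growth is the point.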

The consolidation imperative

More tools do not mean more ROI. The benchmark data makes this unambiguous. Performance correlates with execution discipline and integration depth, not with platform count. The enterprises exceeding 40% ROI are not the ones with the most AI tools. They're the ones with the most integrated AI portfolios, where shared infrastructure reduces governance overhead, accelerates time to production, and enables the closed-loop execution that converts AI output into measurable business outcomes.

For enterprise teams evaluating their AI strategy, the tool sprawl finding reframes the conversation. The question isn't “which new AI tool should we add?” It's “how do we get more value from the platforms we already have, and where does consolidation create more ROI than expansion?”

The organizations that answer that question first will compound returns. The ones that keep adding tools will keep compounding costs.

These findings are drawn from research across 255 enterprise leaders. See the full correlation data between tool count, automation levels, and ROI outcomes in the Enterprise AI ROI: 2026 Benchmarks report.
