Organizations have deployed large language models and AI-powered search tools, but the most common outcome is AI that sounds confident yet lacks the business context to be trustworthy. Gartner predicts that through 2026, organizations will abandon 60% of AI projects that are unsupported by AI-ready data. And the RAND Corporation found that more than 80% of AI projects fail overall, with insufficient data quality consistently among the top root causes.

The missing piece isn’t better models, bigger context windows, or more sophisticated prompting. It’s a knowledge fabric. Essentially, a knowledge fabric is an architectural layer that makes AI fluent in how your business actually works by connecting structured data, unstructured documents, conversations, workflows, and institutional knowledge into a semantically linked, continuously learning foundation that grounds every AI interaction in real business context.

Yes, that was a mouthful. But there was no other way to say it. With that in mind, we put this guide together to explain what a knowledge fabric is, why adjacent technologies like RAG and knowledge graphs fall short on their own, and what enterprises should look for when building this critical context layer.

Why your AI lacks business context

Enterprise AI projects fail for a specific, repeatable reason that has nothing to do with model quality. They fail because the AI system doesn’t understand the business it’s supposed to serve.

Consider what happens when a portfolio manager at a financial services firm asks an AI assistant a seemingly straightforward question: "What is our exposure to the healthcare sector, and are there any compliance concerns I should know about?" Answering that question accurately requires synthesizing position data from the trading system, risk metrics from the compliance platform, regulatory updates from the legal repository, and relevant client communication history from the CRM.

The AI needs to understand that these data sources are related, that permission boundaries govern who can see what, and that the definition of "exposure" means something specific in this firm's operating context. A general-purpose large language model, no matter how capable, can’t do this. It lacks the business context.

This context gap is the root cause of enterprise AI hallucinations. Stanford HAI research demonstrated that even RAG-powered legal AI tools hallucinate 17 to 34% of the time on domain-specific queries. That is down from 58 to 88% for general-purpose models, but still far too unreliable for consequential business decisions. The problem isn’t the retrieval mechanism itself. It’s that retrieval without semantic understanding of business context returns fragments rather than intelligence.

Most enterprises have tried to solve this problem by investing in better data infrastructure. They build data warehouses, deploy data lakes, and more recently adopt data fabric architectures to integrate information across systems. These investments solve real problems around data movement, transformation, and governance.

But they don’t solve the context problem. A data fabric can ensure that your AI system has access to the right data. It can’t ensure that the AI system understands what that data means, how different data sources relate to each other, or what matters in the specific context of a business decision.

The result is what practitioners have started calling "context-starved AI": systems that have access to enormous volumes of enterprise data but can’t reason across it in ways that reflect how the business actually operates. The data is available. The meaning is not. And that’s the gap a knowledge fabric fills.

What a knowledge fabric actually is

A knowledge fabric is the semantic context layer between your enterprise data sources and your AI systems. Where a data fabric integrates and governs data movement across systems, a knowledge fabric goes further. It models the relationships, meaning, and business logic that connect disparate information into a coherent representation of how your organization works.

Think of it this way. Your enterprise generates enormous volumes of data across dozens of systems. Just consider your CRM records, contracts, support tickets, financial reports, compliance documents, Slack conversations, email threads, meeting notes, and operational logs. A data fabric makes this data accessible. A knowledge fabric makes it understandable.

It captures not just the data itself but the relationships between data points, the business rules that govern how information should be interpreted, the permission structures that determine who should see what, and the institutional knowledge that defines what terms and concepts mean in your specific operating context.

The concept builds on Gartner's data fabric architecture, which the firm named its number one strategic technology trend for 2022 and continues to recommend as the foundational data management approach for AI-ready enterprises. Gartner defines data fabric as an architecture that enables "frictionless access and sharing of data in a distributed data environment" and estimates it can reduce data management effort by up to 70%. But Gartner's own research reveals the limitation. In a Gartner survey, 63% of organizations either lack or are unsure whether they have the right data management practices for AI. The data is integrated. The knowledge is not.

A knowledge fabric extends data fabric architecture with four additional capabilities that enterprise AI specifically requires.

Semantic linking. Connecting information across sources not just by identifiers or keys but by meaning. A customer complaint in Zendesk, a policy exception in the compliance system, and a contract amendment in the document repository may reference the same business event even though they share no common identifier. A knowledge fabric recognizes these semantic connections and makes them available to AI systems.

Contextual enrichment. Tagging every piece of information with metadata about its lineage, authorship, version, timestamp, and business domain so that AI responses are traceable and explainable.

Dynamic adaptation. Continuously updating the context layer as the business evolves, so that new documents, workflow changes, organizational shifts, and feedback signals are reflected in real time rather than requiring periodic reindexing or rebuilding.

Governed multi-hop reasoning. Enabling AI to traverse relationships across multiple data sources while respecting permission boundaries at every step, so that answers are both comprehensive and appropriately restricted.
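To make the first two capabilities concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular platform works: the `Record` fields, the sample records, and the 0.3 threshold are all hypothetical, and simple word overlap stands in for the embedding similarity a real system would more likely use.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Record:
    """A piece of information plus its enrichment metadata (hypothetical schema)."""
    record_id: str
    source: str      # originating system
    author: str
    version: int
    timestamp: str   # ISO 8601 string
    domain: str      # business domain tag
    text: str


def tokens(text: str) -> set[str]:
    """Lowercased words longer than three characters; a crude stand-in for an embedding."""
    return {w.strip(".,:;").lower() for w in text.split() if len(w) > 3}


def similarity(a: Record, b: Record) -> float:
    """Jaccard overlap of the two token sets, between 0 and 1."""
    ta, tb = tokens(a.text), tokens(b.text)
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0


def semantic_links(records: list[Record], threshold: float = 0.3) -> list[tuple[str, str]]:
    """Link records from different sources whose content overlaps enough."""
    return [
        (a.record_id, b.record_id)
        for a, b in combinations(records, 2)
        if a.source != b.source and similarity(a, b) >= threshold
    ]


# Hypothetical sample data: no shared identifier between any of these records.
RECORDS = [
    Record("ticket-101", "zendesk", "j.doe", 1, "2024-03-02T09:15:00Z", "support",
           "Customer Acme disputes late delivery penalty clause"),
    Record("exception-7", "compliance", "r.lee", 2, "2024-03-04T14:00:00Z", "compliance",
           "Policy exception granted, Acme late delivery penalty waived"),
    Record("invoice-55", "erp", "m.kim", 1, "2024-03-05T08:30:00Z", "finance",
           "Quarterly office catering invoice approved"),
]

links = semantic_links(RECORDS)
```

With this sample data, the support ticket and the policy exception are linked by content alone, while the unrelated invoice stays unlinked. Because each `Record` carries its source, author, version, and timestamp, anything built on a link can still be traced back to where it came from.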

No major analyst firm has formally defined "knowledge fabric" as a market category. The term sits at the intersection of data fabric, knowledge graphs, and enterprise AI grounding, which means organizations searching for this capability often evaluate point solutions in each of those adjacent categories without finding a unified approach.

That gap between what enterprises need and what the market has formally named creates both confusion and opportunity. The organizations that recognize what a knowledge fabric does, regardless of what vendors call it, gain a significant architectural advantage.

How it works: Multi-hop reasoning sequence
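One way to picture the sequence is as a graph traversal that checks permissions before every hop and records the path it took. The sketch below is a simplified illustration, not a description of any vendor's implementation. The node names, relationships, and roles in `EDGES` and `ACL` are hypothetical, loosely modeled on the portfolio manager example from earlier.

```python
from collections import deque

# Hypothetical relationship graph: node -> list of (relationship, neighbor).
EDGES = {
    "portfolio:healthcare": [("holds", "position:MEDX")],
    "position:MEDX": [("flagged_by", "risk:alert-12"), ("discussed_in", "crm:note-88")],
    "risk:alert-12": [("cites", "reg:update-3")],
}

# Hypothetical access policy: node -> roles allowed to read it.
ACL = {
    "portfolio:healthcare": {"pm", "compliance"},
    "position:MEDX": {"pm", "compliance"},
    "risk:alert-12": {"compliance"},
    "crm:note-88": {"pm"},
    "reg:update-3": {"pm", "compliance"},
}


def governed_traverse(start, roles, max_hops=3):
    """Breadth-first traversal that checks permissions before each hop.

    Returns {node: path}, where path lists the (node, relationship) steps
    taken to reach that node, so every result carries its own provenance.
    """
    if not roles & ACL.get(start, set()):
        return {}
    reached = {start: []}
    queue = deque([(start, 0)])
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue
        for relation, neighbor in EDGES.get(node, []):
            if neighbor in reached:
                continue
            if not roles & ACL.get(neighbor, set()):
                continue  # deny by default, and never traverse through a denied node
            reached[neighbor] = reached[node] + [(node, relation)]
            queue.append((neighbor, hops + 1))
    return reached


pm_view = governed_traverse("portfolio:healthcare", {"pm"})
compliance_view = governed_traverse("portfolio:healthcare", {"compliance"})
```

In this sketch a node the user can't read also blocks every path that runs through it. The portfolio manager role never reaches the regulatory update, because the only route to it passes through a compliance-only risk alert. That is a deliberately conservative design choice; a real system might instead surface the reachable node while hiding the restricted step.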

Why RAG and knowledge graphs are not enough on their own

The enterprise AI market offers several technologies that address pieces of the context problem. Retrieval augmented generation, knowledge graphs, and semantic layers each solve genuine challenges. But none of them, in isolation, delivers what a knowledge fabric provides.

RAG has become the default approach for grounding AI in enterprise data. The pattern is straightforward. When a user asks a question, the system retrieves relevant document chunks from a vector database and feeds them to the language model alongside the query. RAG meaningfully reduces hallucination rates, but has fundamental limitations that become apparent at enterprise scale.

It retrieves text fragments, not business context. It can’t reason across multiple data sources in a single query. It loses critical metadata like document lineage, permission boundaries, and temporal relevance during the chunking and embedding process. And it degrades on multi-step reasoning tasks where the answer requires traversing relationships that no single document chunk contains.
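The basic RAG pattern fits in a few lines, which also makes its limits easy to see. The following is a toy sketch: the bag-of-words `embed` function is an assumption standing in for a learned embedding model and a vector database, and the sample chunks are invented.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real system would use a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str]) -> str:
    """Place the retrieved chunks ahead of the question for the language model."""
    context = "\n".join(f"- {c}" for c in retrieve(query, chunks))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical chunks, already stripped of lineage, permissions, and dates.
CHUNKS = [
    "healthcare exposure rose to 12 percent of the portfolio",
    "the cafeteria menu changes every monday",
    "compliance flagged the healthcare position for review",
]

top = retrieve("what is our healthcare exposure", CHUNKS)
prompt = build_prompt("what is our healthcare exposure", CHUNKS)
```

Notice what the chunks are: plain strings. Nothing in them says which system they came from, who may read them, or how old they are, and nothing connects the exposure figure to the compliance flag beyond a shared word.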

Knowledge graphs address the relationship problem that RAG misses. By modeling entities and their connections explicitly, knowledge graphs enable multi-hop queries that traverse complex relationship structures. But knowledge graphs require significant upfront modeling effort, struggle with unstructured content that does not fit neatly into predefined schemas, and typically serve structured data use cases rather than the full spectrum of enterprise information that includes documents, conversations, and workflows.

Semantic layers, increasingly positioned as the bridge between data and AI, solve yet another piece. They standardize business metrics and definitions so that AI systems interpret structured data consistently. A semantic layer ensures that "revenue" means the same thing regardless of which system the data comes from. But semantic layers are designed for structured, quantitative data. They don’t address the unstructured knowledge that Gartner estimates comprises 80 to 90% of all enterprise data.
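At its simplest, a semantic layer is a shared mapping from a business term to the field that carries it in each system. Here is a minimal sketch; the system names and field names in `METRICS` are hypothetical.

```python
# Hypothetical canonical definitions: metric -> {system: field name in that system}.
METRICS = {
    "revenue": {
        "crm": "closed_won_amount",
        "erp": "net_sales",
        "billing": "invoiced_total",
    },
}


def resolve(metric: str, system: str, row: dict) -> float:
    """Read a canonical metric from a system-specific row."""
    field = METRICS[metric][system]
    return float(row[field])


crm_revenue = resolve("revenue", "crm", {"closed_won_amount": 1200})
erp_revenue = resolve("revenue", "erp", {"net_sales": "1200.00"})
```

Both calls return the same number under the same name. The mapping works because the inputs are rows with named fields, which is exactly why this approach stops short of contracts, emails, and meeting notes.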

A knowledge fabric integrates the strengths of all three approaches into a unified context layer. It uses semantic linking (like knowledge graphs) to model relationships across data sources. It incorporates retrieval capabilities (like RAG) to surface relevant information in response to queries. It standardizes business definitions (like semantic layers) to ensure consistent interpretation.

And it adds what none of these technologies provide independently: continuous learning from business interactions, dynamic adaptation as the organization evolves, governed traceability across every AI response, and the ability to reason across both structured and unstructured data within a single contextual framework. The result is an AI system that does not just retrieve relevant information but understands how your business actually works.

Where knowledge fabric creates the most value

The business case for a knowledge fabric is strongest in environments where decisions depend on synthesizing information across multiple systems, where institutional knowledge is distributed across people and documents rather than codified in databases, and where AI accuracy has direct operational or regulatory consequences.

Financial services is the clearest example. Investment firms, banks, and insurance companies make decisions that require simultaneous access to structured portfolio data, unstructured research notes, regulatory filings, client communications, and compliance documentation. The knowledge required to make a sound investment decision, assess a risk exposure, or evaluate a compliance obligation does not live in any single system. It lives in the connections between systems.

A knowledge fabric that links portfolio positions to research analysis, regulatory requirements to compliance assessments, and client communications to contractual obligations provides the kind of cross-domain intelligence that makes AI genuinely useful rather than merely fast. This is why firms deploying knowledge fabric architectures for compliance and risk workflows report significant reductions in manual review time while improving both accuracy and audit readiness.

IT operations present a different but equally compelling use case. When a critical system incident occurs, the knowledge needed to diagnose and resolve it is scattered across monitoring dashboards, incident postmortems, runbooks, configuration databases, and tribal knowledge locked in the heads of senior engineers.

A knowledge fabric that connects monitoring alerts to historical incident patterns, resolution procedures, and configuration dependencies transforms incident response from a manual investigation into an AI-assisted synthesis.

Legal and compliance teams face a variation of the same challenge. Contracts, regulatory requirements, internal policies, and correspondence contain obligations and risks that must be tracked across time, jurisdictions, and organizational boundaries. The volume makes manual tracking impossible at scale, but the consequence of missing a critical obligation makes AI accuracy essential.

A knowledge fabric that links contractual terms to regulatory requirements and internal policies to compliance assessments, and that extracts structured intelligence from unstructured documents, provides the foundation for compliance automation that auditors and regulators can trust because every insight traces back to its source.

The common thread is that knowledge fabric value compounds with organizational complexity. The more systems you have, the more unstructured data your teams produce, and the higher the stakes of AI-informed decisions, the greater the return on building a coherent context layer that makes all of that knowledge accessible and meaningful to AI.

How knowledge fabric ensures governance, security, and auditability

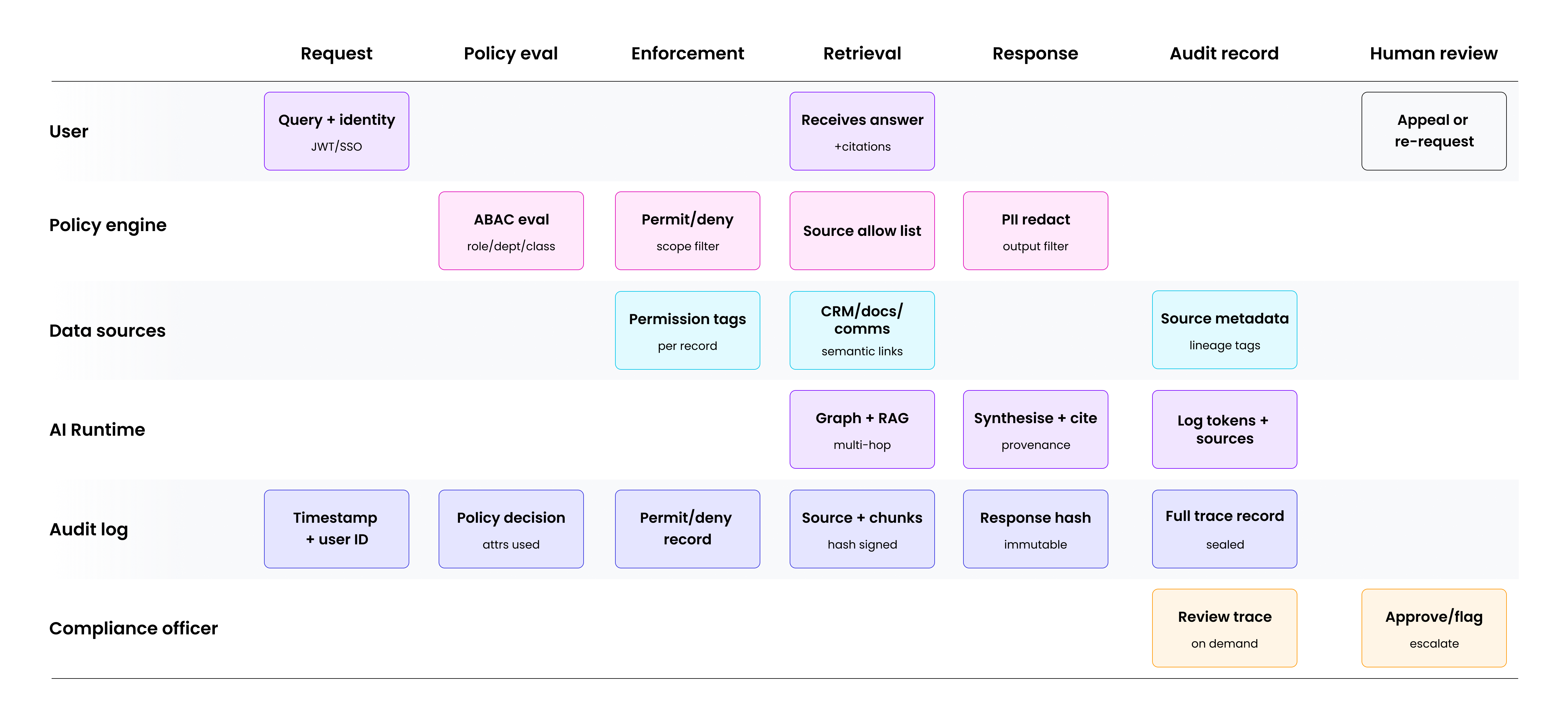

For enterprise use, accuracy alone is not enough. Every AI interaction must be governed, permission-aware, and auditable. The figure shows how a knowledge fabric enforces policy and captures provenance at every step of a query.

What to look for in a knowledge fabric platform

The market for enterprise AI context is fragmented across data fabric vendors, knowledge graph platforms, RAG infrastructure providers, and semantic layer tools. Because no analyst firm has defined "knowledge fabric" as a formal category, evaluating solutions requires asking specific architectural questions that distinguish a genuine knowledge fabric from a repackaged component. Those questions follow from what we’ve covered thus far.

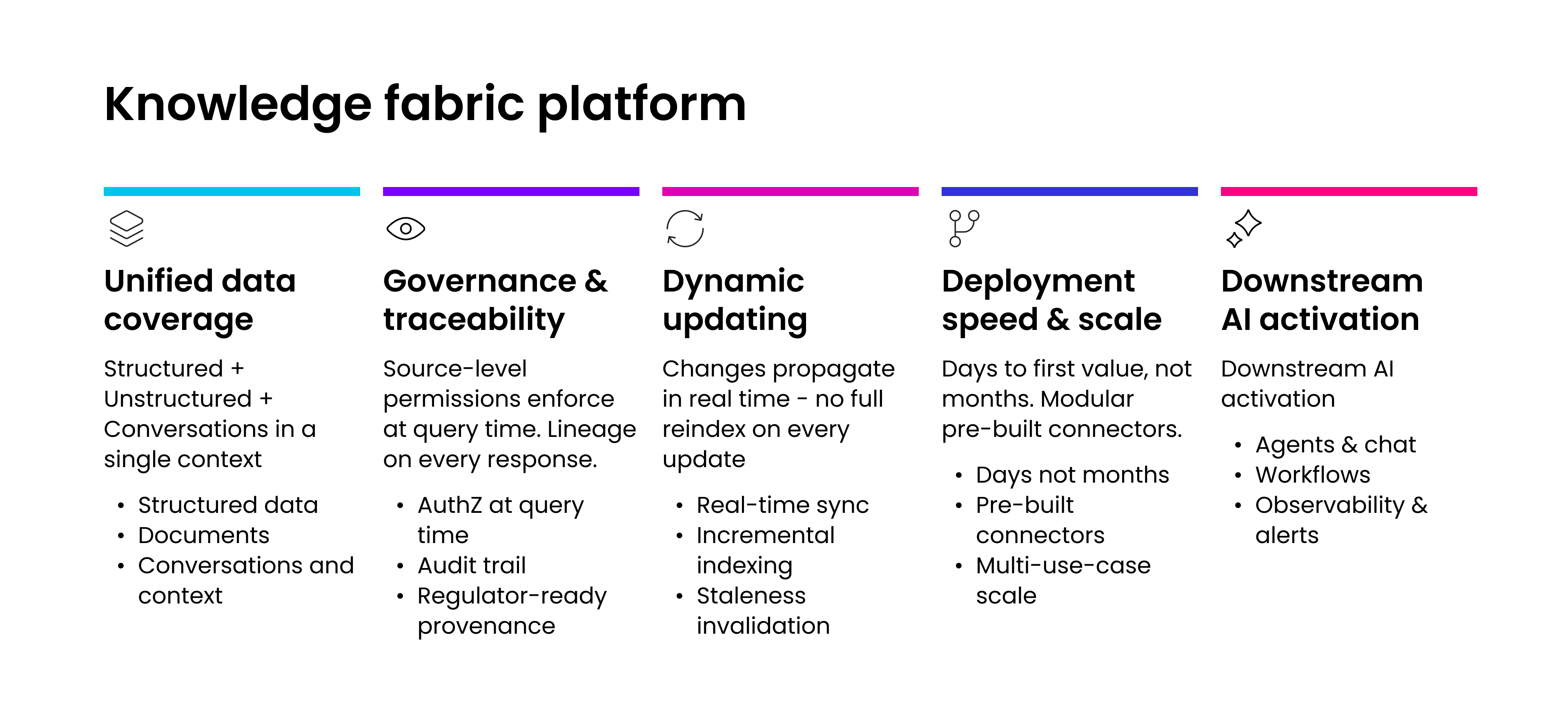

Start by assessing whether the platform handles both structured and unstructured data within a single context layer. Many vendors excel at one but not the other. A semantic layer that standardizes metrics but can’t reason over contracts is not a knowledge fabric. A document AI tool that extracts information from PDFs but can’t connect those extractions to operational data is not a knowledge fabric either.

The value of a knowledge fabric comes from its ability to link information across types and sources, so that an AI query can draw on structured data, unstructured documents, and conversational context simultaneously.

Next, evaluate governance and traceability. For any enterprise operating in a regulated industry or handling sensitive data, every AI response must be traceable back to its source materials with clear lineage. Ask how the platform handles permissions. Does it enforce source-level access controls at query time, or does it rely on a separate authorization layer? Ask about auditability. Can you demonstrate to a regulator exactly which data sources contributed to a specific AI output?
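As a rough picture of what query-time enforcement with an audit trail can look like, consider the sketch below. It is an assumption-laden illustration rather than any platform's actual API: `retrieve_with_audit`, the document dictionaries, and the role names are all hypothetical.

```python
from datetime import datetime, timezone


def retrieve_with_audit(user, roles, query, documents, audit_log):
    """Apply source-level access rules at query time and log what was used.

    Each document is a dict with an "id" and a set of "allowed_roles".
    Returns the documents the user may see plus the audit record written.
    """
    allowed = [d for d in documents if roles & d["allowed_roles"]]
    withheld = [d["id"] for d in documents if not roles & d["allowed_roles"]]
    record = {
        "user": user,
        "query": query,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "sources_used": [d["id"] for d in allowed],
        "sources_withheld": withheld,
    }
    audit_log.append(record)
    return allowed, record


# Hypothetical sources and roles.
DOCUMENTS = [
    {"id": "trading/positions", "allowed_roles": {"pm", "compliance"}},
    {"id": "legal/reg-updates", "allowed_roles": {"compliance"}},
]

audit_log = []
visible, record = retrieve_with_audit(
    "alice", {"pm"}, "healthcare exposure?", DOCUMENTS, audit_log
)
```

The audit record answers the regulator's question directly: for this query, by this user, at this time, these sources contributed and these were withheld. When you evaluate a platform, it is worth asking to see the equivalent record for a real query.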

You’ll also need to look at how the platform handles change. Enterprise knowledge evolves continuously. Documents are updated. Organizational structures shift. Regulatory requirements change. A knowledge fabric that requires full reindexing when the underlying data changes is a liability in a fast-moving enterprise. Dynamic updating, where changes propagate through the context layer in real time, is essential for keeping AI responses current and trustworthy.
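One common way to avoid full reindexing is to fingerprint each document and reprocess only what changed. The sketch below shows that idea in its simplest form. The `plan_update` function and the document IDs are hypothetical, and a production system would more likely react to change events from the source systems than compare snapshots.

```python
import hashlib


def fingerprint(text: str) -> str:
    """Stable content hash used to detect whether a document changed."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_update(indexed: dict[str, str], current: dict[str, str]):
    """Work out the minimal reindexing needed.

    indexed: doc_id -> fingerprint recorded when the document was last indexed
    current: doc_id -> current document text
    Returns (to_index, to_delete) as sorted lists of document IDs.
    """
    to_index = sorted(
        doc_id for doc_id, text in current.items()
        if indexed.get(doc_id) != fingerprint(text)
    )
    to_delete = sorted(doc_id for doc_id in indexed if doc_id not in current)
    return to_index, to_delete


# Hypothetical state: one unchanged, one edited, one removed, one new document.
indexed = {
    "policy-a": fingerprint("version 1"),
    "policy-b": fingerprint("old wording"),
    "policy-c": fingerprint("retired"),
}
current = {
    "policy-a": "version 1",
    "policy-b": "new wording",
    "policy-d": "brand new",
}

to_index, to_delete = plan_update(indexed, current)
```

Only the edited and new documents are reprocessed, the removed one is dropped, and the unchanged one is left alone. The cost of an update then scales with the size of the change rather than the size of the corpus.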

Assessing deployment speed and the ability to scale across use cases is also a must. If the platform requires six to twelve months of modeling and integration work before delivering value, it may be architecturally sound but operationally impractical. Managed AI delivery platforms that have gained traction in the enterprise market are deploying knowledge fabric capabilities in days rather than months by using modular building blocks and pre-built integration patterns.

Lastly, you’ll want to consider how the platform connects knowledge fabric to downstream AI capabilities. Context without action is academic. A knowledge fabric should feed directly into conversational agents that deliver answers at the point of decision, automated workflows that act on synthesized intelligence, and observability systems that surface insights proactively rather than waiting for someone to ask. The organizations extracting the most value from knowledge fabric treat it not as a standalone capability but as the foundation that makes every other AI investment more effective.

The enterprises that will pull ahead in the next phase of AI adoption are not the ones with the best models. They are the ones with the best context. Models are increasingly commoditized. Context is not. It is specific to your organization, your data, your workflows, and your institutional knowledge.

A knowledge fabric is the architectural layer that captures, connects, and continuously refines that context so that every AI interaction is grounded in how your business actually works. The question is not whether your enterprise needs one. It is how quickly you can build it.

To learn how Unframe helps enterprises build AI solutions that are fluent in their business, visit our page on knowledge fabric.